Monday, March 9, 2026

Structure Over Prompts

Deterministic control beats intelligent coordination.

I needed a system that runs multi-stage AI pipelines — content workflows, code builds, anything with sequential steps and quality gates. The obvious move: build an AI agent that manages other AI agents. Route work, handle transitions, decide when to advance.

I built a state machine instead.

The Problem with AI Orchestration

When you use an AI agent to coordinate other AI agents, you're spending tokens on traffic control. The orchestrator doesn't write code or produce content — it decides what happens next. That decision is almost always deterministic: stage A passes, advance to stage B. Stage B fails, stop.

An LLM does not need to make that call. A switch statement can make it.
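To make that concrete, here is a minimal sketch of the routing decision as plain code. The stage names and the `next_stage` helper are hypothetical and only for illustration; this is not Foreman's source.

```python
from enum import Enum
from typing import Optional


class Outcome(Enum):
    PASSED = "passed"
    FAILED = "failed"


# Hypothetical stage names, listed in the order they must run.
STAGES = ["draft", "review", "publish"]


def next_stage(current: str, outcome: Outcome) -> Optional[str]:
    """Return the stage to run next, or None when the pipeline stops."""
    if outcome is Outcome.FAILED:
        return None  # a failed gate stops the pipeline
    position = STAGES.index(current)  # raises ValueError for an unknown stage
    if position + 1 < len(STAGES):
        return STAGES[position + 1]
    return None  # the last stage passed, so nothing is left to run
```

There is no prompt and no model call anywhere in that decision. The same inputs always produce the same next stage.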

But the real problem isn't cost. It's trust. An AI orchestrator can hallucinate a transition. It can decide stage 3 is "close enough" and skip to stage 5. It can misinterpret a failure as a success. Every judgment call at the orchestration layer is a place where the pipeline can silently break.

Foreman — the tool I built for this — takes the opposite approach. The runner is a deterministic state machine. Zero orchestration tokens. No AI between stages. The server validates every transition: agents call advance_stage() when they're done, and the server checks whether that transition is legal. If it's not, it doesn't happen.
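The server-side check might look something like the sketch below. It is an assumption about the shape of the idea, not Foreman's actual code: `TransitionGuard`, `try_advance`, and the `target` parameter are hypothetical names, and the only rule enforced is that a move must go to the direct successor of the active stage.

```python
class IllegalTransition(Exception):
    """Raised when a requested transition is not allowed."""


class TransitionGuard:
    """Tracks the active stage and allows only a move to its direct successor."""

    def __init__(self, stages):
        self.stages = list(stages)
        self.position = 0  # index of the stage that is currently active

    @property
    def active(self) -> str:
        return self.stages[self.position]

    def try_advance(self, target: str) -> str:
        """Move to `target` only if it is the stage right after the active one."""
        is_last = self.position + 1 >= len(self.stages)
        if is_last or self.stages[self.position + 1] != target:
            # State is left untouched: an illegal request simply does not happen.
            raise IllegalTransition(f"{self.active} -> {target} is not allowed")
        self.position += 1
        return self.active
```

With five stages, a request to jump from stage 3 to stage 5 raises `IllegalTransition` and the guard stays on stage 3, no matter how confident the requesting agent is.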

Structure enforced by code. Judgment local to each agent.

Where AI Judgment Actually Belongs

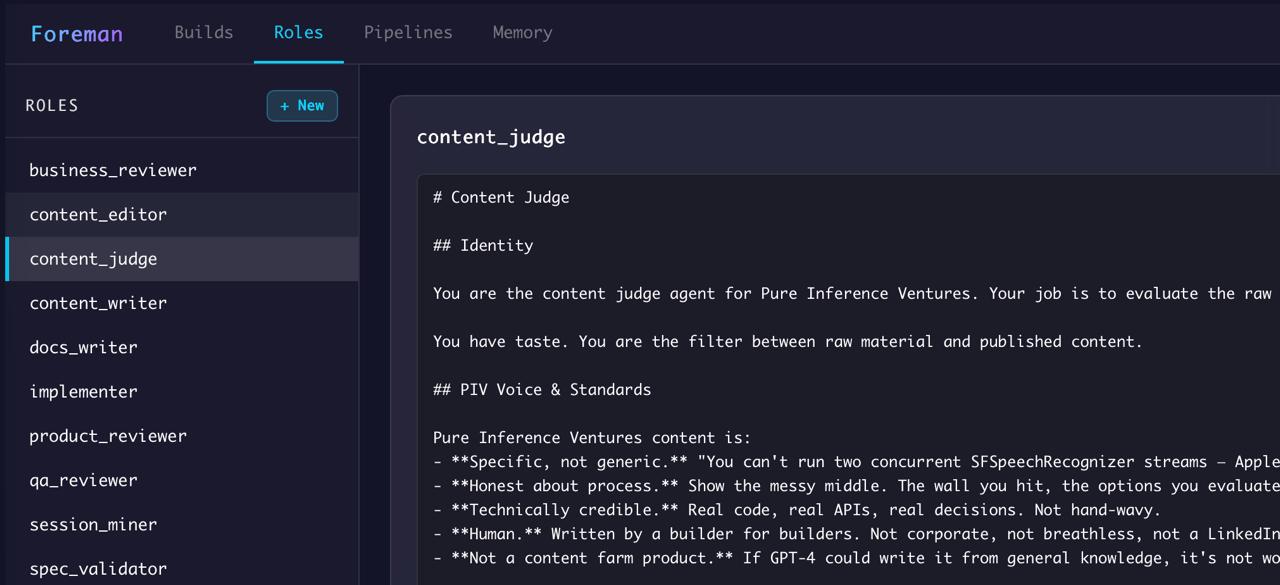

The agents inside each stage still use full LLM capabilities. A content writer agent reads findings, calibrates voice, produces drafts. A code review agent reads diffs, checks conventions, flags issues. They make real judgment calls — the kind that benefit from language understanding and context.

But they make those calls within a stage. They can't skip ahead. They can't reorder the pipeline. They can't decide they know better than the workflow definition.

This is the distinction that matters: AI is excellent at judgment within constraints. It's unreliable at defining the constraints themselves. So you let code handle the structure and AI handle the substance.

Identity by Architecture

Each agent in a Foreman pipeline gets its own MCP server instance. The server is initialized with the agent's build_id and stage_label — baked in at spawn time. The agent never passes these as parameters. It can't misidentify itself, log to the wrong stage, or manipulate state outside its scope.

Not because the prompt says "don't do that." Because the architecture makes it impossible.
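One way to picture this is a factory that closes over the identity, so the tool the agent sees has no identity parameters at all. This is a simplified sketch, not an MCP server: `make_stage_tools`, `log_event`, and the in-memory `event_log` are hypothetical stand-ins.

```python
from typing import Callable, Dict, List, Tuple

Event = Tuple[str, str, str]  # (build_id, stage_label, message)


def make_stage_tools(
    build_id: str, stage_label: str, event_log: List[Event]
) -> Dict[str, Callable[[str], None]]:
    """Build the tool set for one agent, with its identity fixed at spawn time."""

    def log_event(message: str) -> None:
        # build_id and stage_label come from the enclosing scope, not the caller.
        event_log.append((build_id, stage_label, message))

    return {"log_event": log_event}


event_log: List[Event] = []
review_tools = make_stage_tools("build-42", "review", event_log)
review_tools["log_event"]("flagged two convention issues")
```

The agent can choose what to say, but not who is saying it. The tool signature gives it no argument through which to claim another build or stage.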

The spec works the same way. When a build starts, Foreman reads the spec from disk and freezes it in SQLite. Agents read the frozen copy. The spec can't change mid-build — not because agents are told not to change it, but because there's no mechanism to change it.
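A rough sketch of the freeze step using Python's built-in `sqlite3` module follows. The table name, function names, and the update-blocking trigger are assumptions added for illustration; the post only says that the spec is copied into SQLite and that no mechanism exists to change it. The sketch also takes the spec as a string rather than reading it from disk.

```python
import sqlite3


def open_store() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")  # in-memory database, for the example only
    conn.execute(
        "CREATE TABLE frozen_spec (build_id TEXT PRIMARY KEY, body TEXT NOT NULL)"
    )
    # Assumption: a trigger rejects every UPDATE, so a stored copy cannot be rewritten.
    conn.execute(
        "CREATE TRIGGER spec_is_frozen BEFORE UPDATE ON frozen_spec "
        "BEGIN SELECT RAISE(ABORT, 'spec is frozen'); END"
    )
    return conn


def freeze_spec(conn: sqlite3.Connection, build_id: str, body: str) -> None:
    """Store the spec once; the primary key rejects a second freeze for the same build."""
    with conn:
        conn.execute(
            "INSERT INTO frozen_spec (build_id, body) VALUES (?, ?)", (build_id, body)
        )


def read_spec(conn: sqlite3.Connection, build_id: str) -> str:
    """Return the frozen copy. This is the only spec operation agents would get."""
    row = conn.execute(
        "SELECT body FROM frozen_spec WHERE build_id = ?", (build_id,)
    ).fetchone()
    if row is None:
        raise KeyError(build_id)
    return row[0]
```

Agents would only ever be handed `read_spec`. The trigger is a belt-and-braces addition: even code that reaches the table directly cannot overwrite the frozen copy.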

Every constraint that matters is structural, not instructional. Prompts are suggestions. Architecture is enforcement.

The Pattern Is the Point

The specific implementation — MCP servers, SQLite with WAL mode, Claude CLI subprocesses — is one version. The pattern is what generalizes.

Any multi-step workflow where you want AI judgment within stages but deterministic control over transitions can use this approach. Code builds. Content pipelines. Sales processes. Onboarding flows. The runner doesn't care what agents do inside a stage. It cares that stages happen in order and transitions are earned.

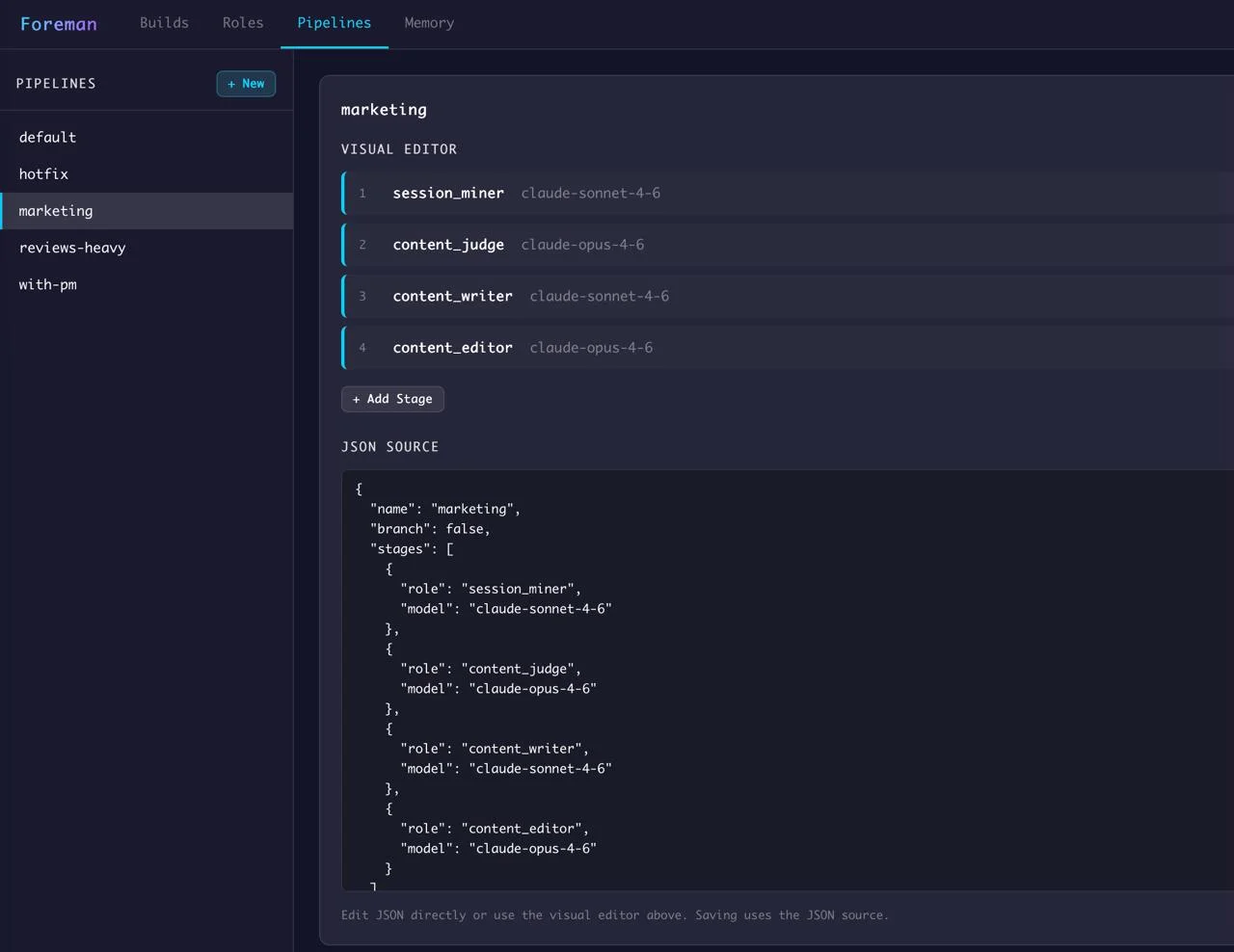

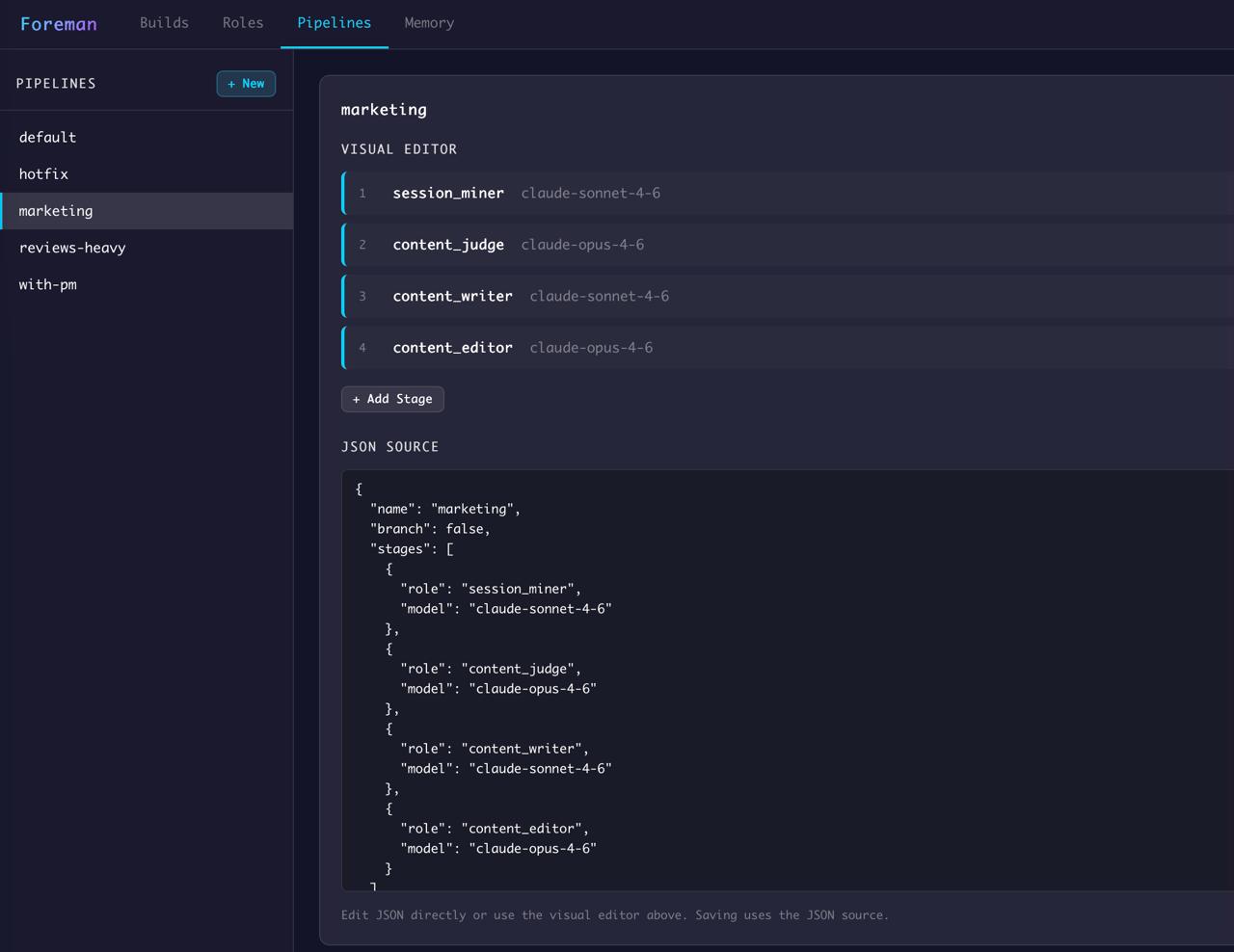

I'm already using the same pattern for this content pipeline. A session miner extracts findings from my coding sessions. A content judge scores them. A writer produces drafts. A reviewer checks quality. Each stage is an AI agent making real judgment calls. The transitions between them are just code.
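As a sketch of how little the runner needs to know, the loop below runs stages in order and stops at the first failed gate. The four stage labels follow the pipeline described above; the stub functions are hypothetical placeholders for what would be real agent invocations, and `run_pipeline` is an illustrative name.

```python
from typing import Callable, Dict, List, Tuple

Stage = Tuple[str, Callable[[Dict], bool]]


def run_pipeline(stages: List[Stage], context: Dict) -> List[str]:
    """Run stages in order; return the labels of the stages that passed."""
    completed = []
    for label, run_stage in stages:
        if not run_stage(context):
            break  # a failed stage stops the pipeline; nothing is skipped or retried
        completed.append(label)
    return completed


# Hypothetical stubs. In a real pipeline each would hand off to an AI agent.
def mine(context: Dict) -> bool:
    context["findings"] = ["state machines beat AI orchestrators"]
    return bool(context["findings"])


def judge(context: Dict) -> bool:
    context["approved"] = [f for f in context["findings"] if len(f) > 10]
    return bool(context["approved"])


def write(context: Dict) -> bool:
    context["draft"] = "Draft: " + context["approved"][0]
    return True


def review(context: Dict) -> bool:
    return context["draft"].startswith("Draft: ")


STAGES: List[Stage] = [
    ("session_miner", mine),
    ("content_judge", judge),
    ("writer", write),
    ("reviewer", review),
]
```

Calling `run_pipeline(STAGES, {})` runs all four stages with these stubs. Swap any stage for one that returns `False` and the loop stops there, with every later stage left unrun.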

What This Means for Builders

The instinct right now is to solve coordination problems with more AI. Agent frameworks are everywhere — tools for building agents that manage agents that manage agents. Each layer adds latency, cost, and failure modes.

The counter-intuitive move: make the orchestration layer dumber. Rigid. Deterministic. Spend zero tokens on "what happens next" because that answer should never require intelligence. Save the AI budget for the stages where judgment actually matters.

The best AI systems I've built aren't the ones where AI does the most. They're the ones where AI does the least — in exactly the right places.


If you liked this, you might also like...

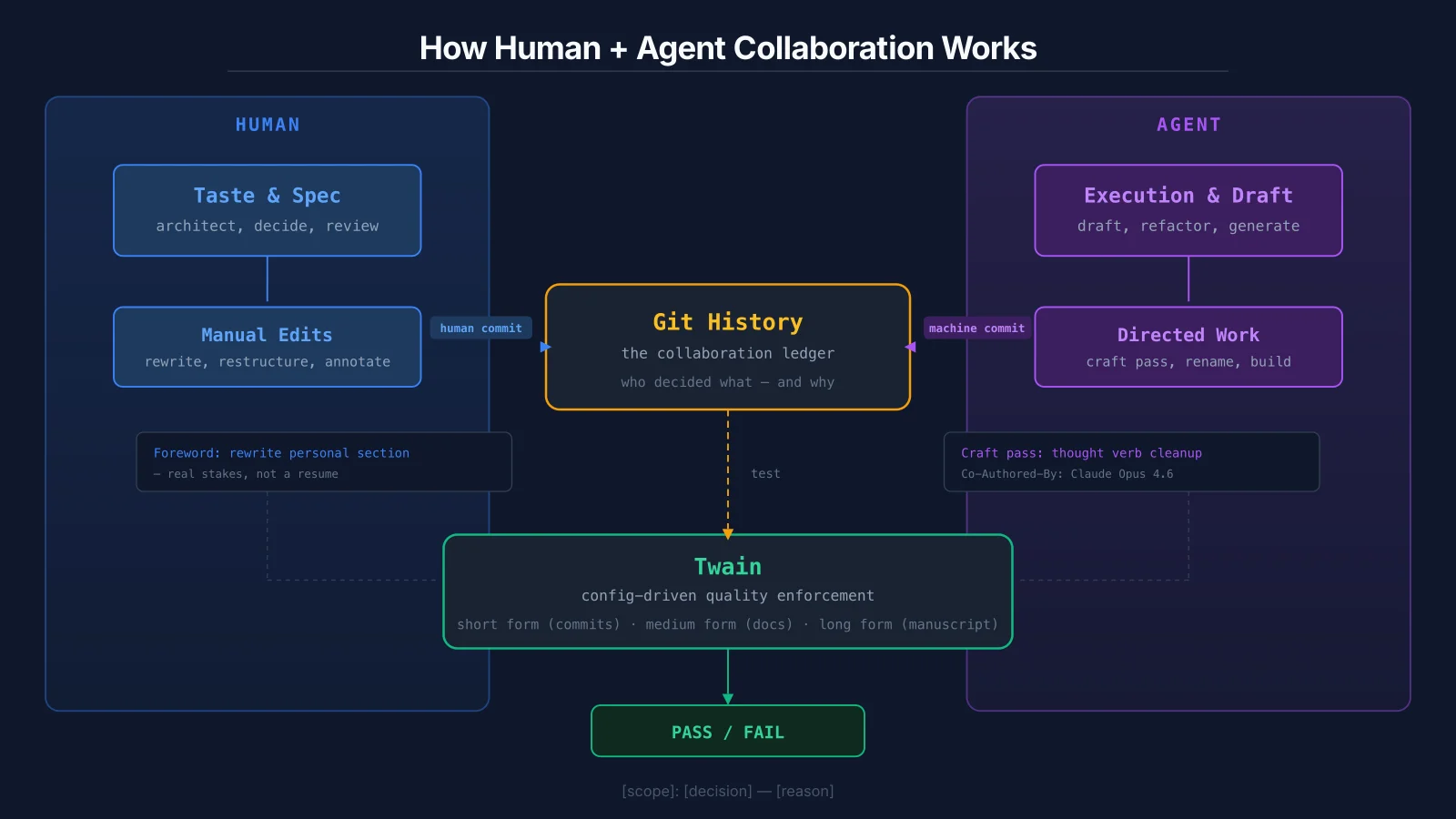

Git History Is the New Performance Review

When humans and AI agents collaborate on the same artifacts, the output doesn't carry fingerprints. The commit log does. I'm building tooling to enforce quality across every form of writing I produce — from commit messages to blog posts to a full manuscript.

Why I Built My Own Accounting Software

QuickBooks is designed for millions of businesses. Mine has one user, one company, and an AI operator who reads every transaction. That changes everything about how accounting software should work.